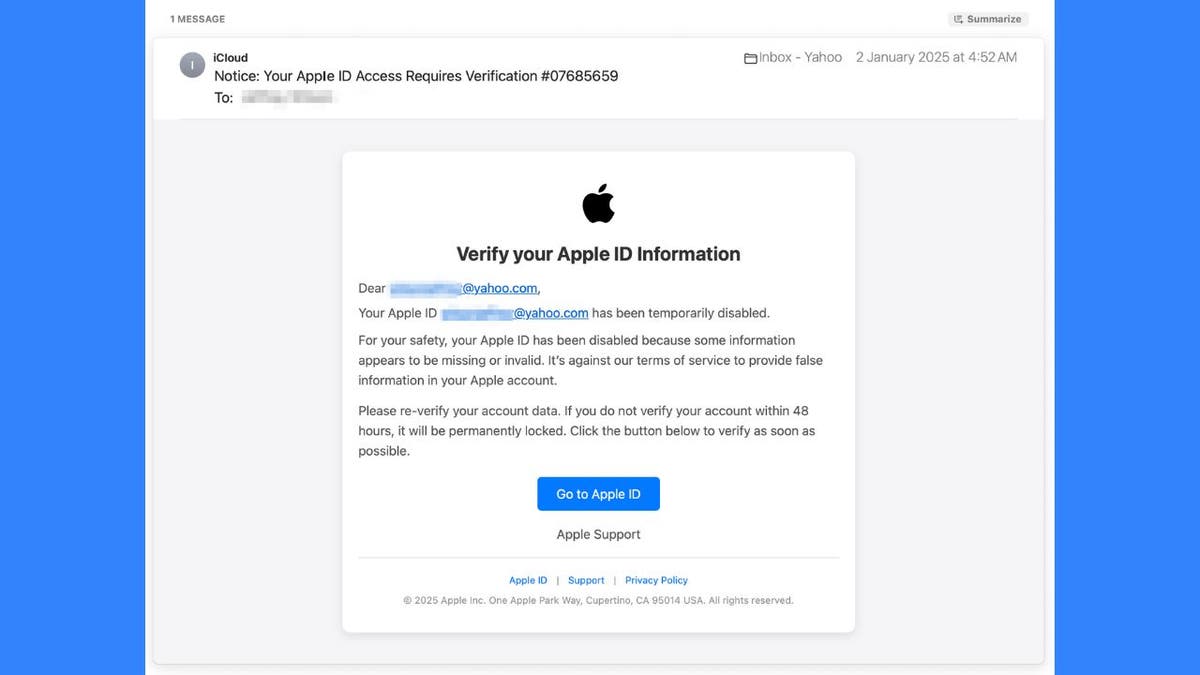

A recent tragedy in Japan has ignited debate over the use of artificial intelligence (AI) in child protection services. A four-year-old girl died while in her mother's care, even though authorities had consulted an AI program designed to assess risk. The program put the likelihood that the child needed protection at 39%, and officials, citing the mother's apparent cooperation with child guidance experts, opted not to intervene further. The decision proved fatal, and the mother now faces charges related to her daughter's death.

Mie Prefecture Governor Katsuyuki Ichimi acknowledged the use of AI in the decision-making process, but emphasized that the final judgment rested with human officials. He expressed concerns about the methodology employed and announced plans for an independent review by external experts to assess the system's future use.

The AI program, implemented in 2020, was trained on thousands of cases and was intended to ease the workload of child consultation centers. In this case, the program weighed factors such as the mother's willingness to accept both guidance and scheduled visits from officials. However, the child consultation center reportedly failed to follow up with the family for an extended period, even after the girl had been absent from daycare for a prolonged time.

The Mie Prefecture government building in Tsu City. (Google Maps)

Japan has been exploring the integration of AI in childcare for several years. (Buddhika Weerasinghe/Getty Images)

This incident raises serious questions about the appropriate use of AI in sensitive areas like child welfare. AI can help process large amounts of data and flag potential risks, but it cannot replace human judgment and empathy. The case highlights the need for a clear understanding of AI's limitations, robust oversight mechanisms, and continued human involvement in critical decisions about children's safety.

Japan's exploration of AI in childcare dates back several years, with initiatives focusing on tracking children's health indicators and development. However, this tragic case underscores the importance of responsible implementation and continuous evaluation to ensure that such technologies enhance, rather than compromise, child welfare.